AI Overviews Optimization: Why Your “Featured Answer” Plan Backfires (Sometimes)

If you’ve been chasing AI Overviews like they’re the new #1 ranking, we get it. It’s shiny, prominent, and feels like free authority. The reverse-angle lesson is the one nobody puts on the slide: being summarized is not the same as being chosen. Overviews compress nuance. Compression changes meaning. And if your source pages leak weak claims, the model will summarize you into a one-line objection that scales far better than your marketing copy.

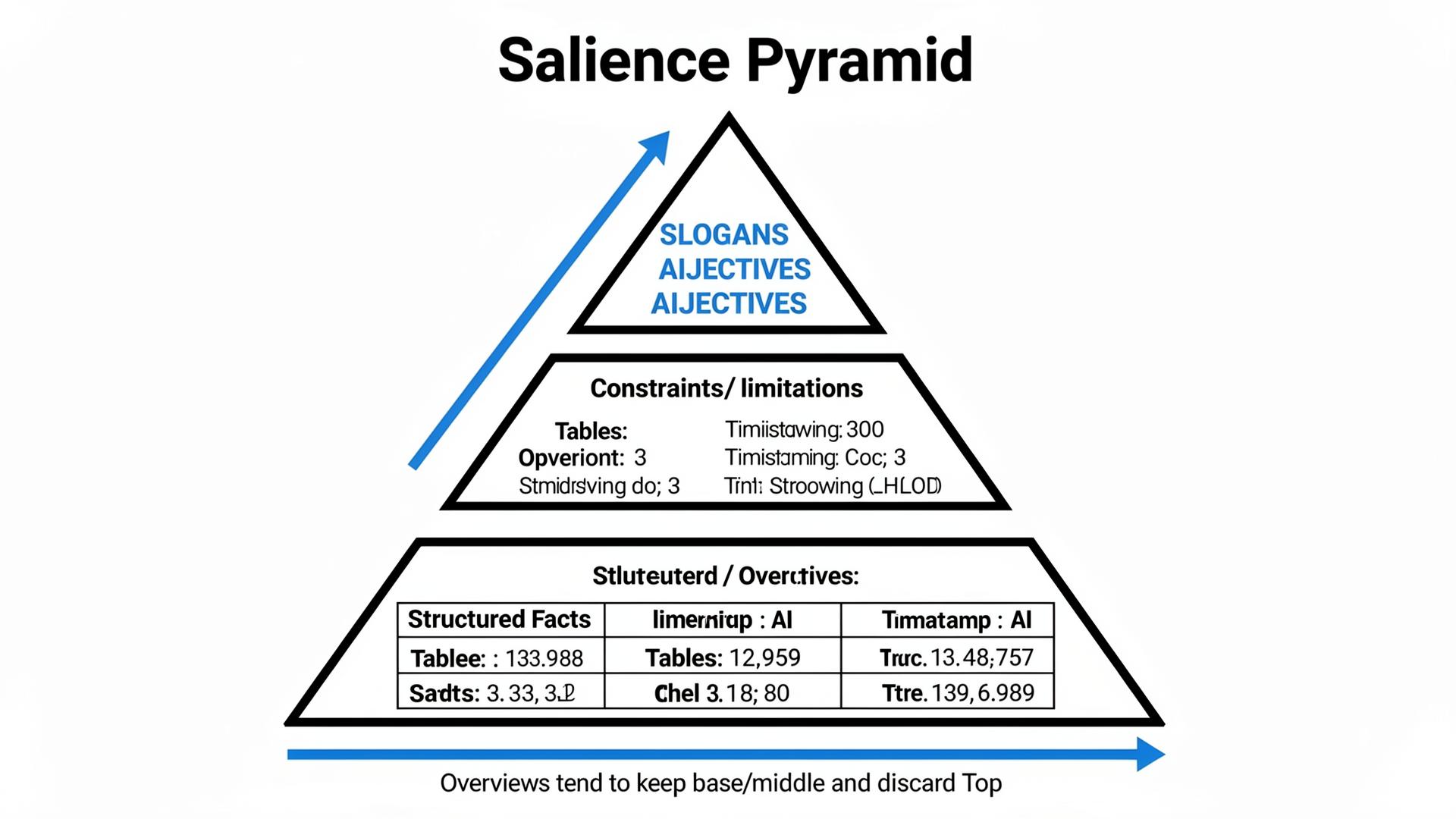

We (Lumonix) treat Overview optimization like working with a harsh editor. The editor will keep the most “salient” bits—often constraints, prices, and caveats—and throw away the rest. Your job is to decide what the editor will find first, what it can safely ground, and what it should not invent on your behalf.

Key Takeaways (Why Overviews Can Hurt Even When They Help)

Overviews reward clarity, not persuasion

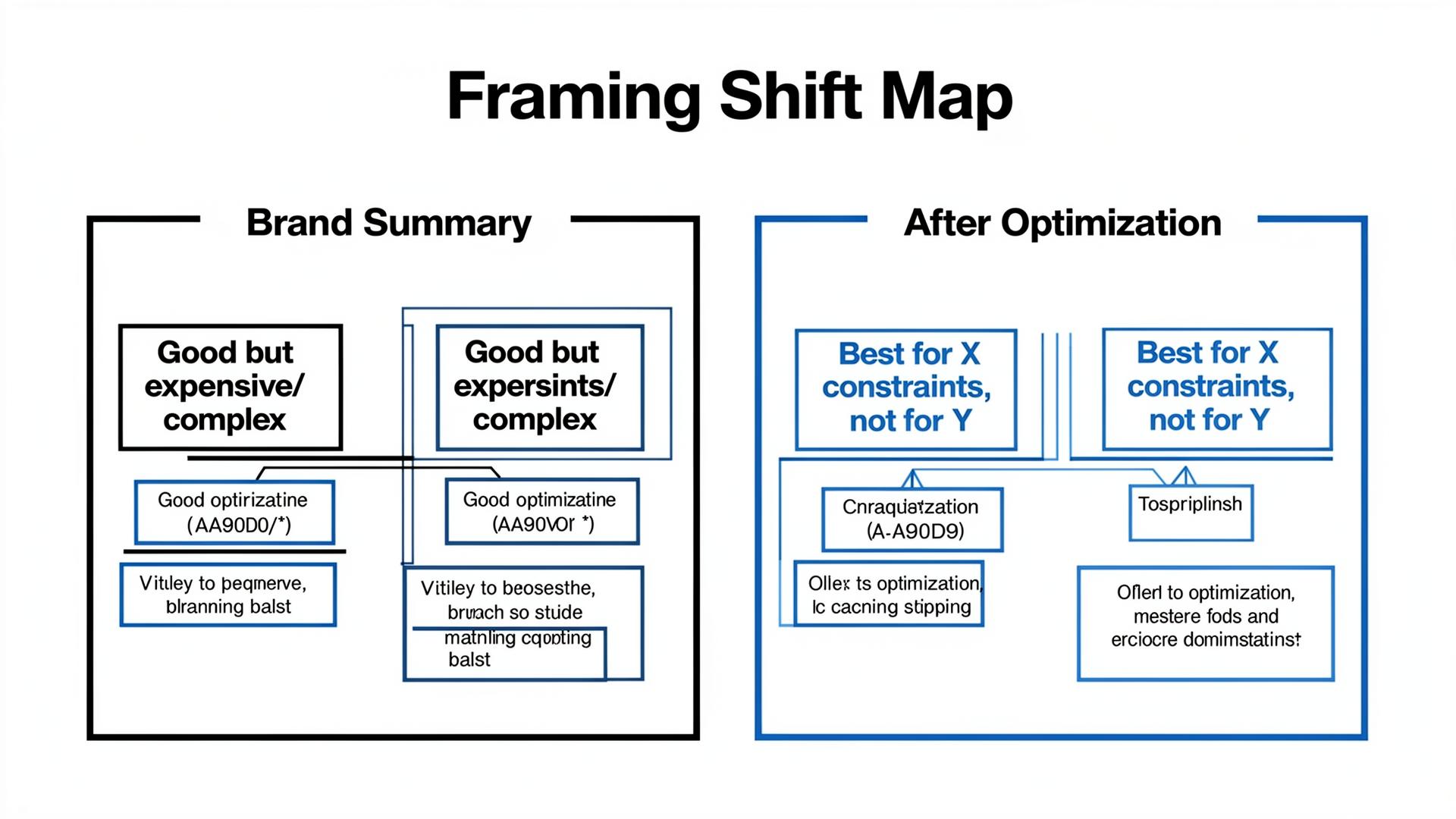

Clarity sounds like a virtue until you realize the model will choose which clear claims represent you. If your clearest claim is “enterprise-grade,” but the web also contains “hard to set up,” the model may compress you into: “powerful but complex.” Congratulations—clear. Unfortunately, that’s a sales objection.

Optimization can amplify objections if your sources are inconsistent

When models see conflicting facts, they hedge. When they see negative sources with strong structure (reviews, directories), they “balance” your claims. The more you push for visibility without fixing source contradictions, the more you amplify the balancing act. You get surfaced more often with a caveat attached.

The best Overview pages are boring on purpose

Boring is: constraints tables, policy clarity, uptime numbers, integration lists, and “who we are not for.” Those are the pieces the model can ground without guessing. If your “Overview optimization” plan is just “write more,” you are feeding the editor more fluff to delete.

Visibility vs Selection: The Uncomfortable Matrix

Why “appearing” can reduce purchase intent

Overviews are high-salience surfaces. They don’t just distribute your message; they distribute the model’s interpretation of your message. If the model’s interpretation frames you as expensive, risky, or complex, you can increase impressions while decreasing selection probability. That is the trap.

Matrix table: what teams do, how it backfires, what we do instead

| Goal | What teams do | How it backfires | Lumonix alternative |

|---|---|---|---|

| Appear more | Publish generic “best practices” and long helpful prose | Model surfaces you as the generic option; differentiation collapses | Publish decision criteria + constraints; define who you’re not for |

| Increase trust | Overclaim and remove caveats | Model “balances” with negative sources; objection framing rises | Use verifiable facts + timestamps; align external nodes to reduce contradictions |

| Drive selection | Optimize one “featured answer” query | Improves a vanity intent; buying prompts stay unchanged | Portfolio approach: optimize comparison and transactional clusters with tables |

| Reduce objections | Hide pricing/policies to avoid controversy | Model guesses; “unknown” becomes “risky,” and you lose by default | Publish explicit boundaries and policy truth blocks the model can ground |

The Technical Layer: How Overviews Are Assembled in RAG-like Pipelines

Overviews are salience filters plus grounding filters

In practice, an Overview is often produced by a pipeline that retrieves candidate sources, selects chunks, then summarizes with a salience bias: what is repeated across sources, what is structured, what is easy to quote, and what seems safe to assert. This is why review directories and policy pages (if structured) can outrank your glossy narrative in influence.

Semantic Density, Entity Resolution, Contextual Grounding—why Overviews pick “the wrong sentence”

Semantic Density matters because high-density chunks (tables, constraints, numbers) get selected and summarized more reliably than low-density prose. Entity Resolution matters because contradictions across sources trigger hedging or balancing language. Contextual Grounding matters because the model needs evidence for summary claims; when your site doesn’t provide groundable facts, the system leans on external nodes—even if those nodes are unflattering.

If you want to “optimize Overviews,” you must design for what the system can ground. That means publishing constraints and proofs you would normally hide because marketing finds them “too specific.” In 2026, specificity is not optional. It’s trust.

“The model isn’t trying to promote you. It’s trying to reduce user effort. If your content increases ambiguity, it will be summarized into the most boring—and sometimes most damaging—interpretation.”

Lumonix CTO — Expert Insight

Lumonix Lab Case Study (Synthetic): “We Won Overviews” and Still Lost the Deal

Baseline: high Overview presence, low qualified inclusion

A fintech vendor (call them “LedgerLane”) celebrated because their brand appeared in Overviews for broad “best X software” prompts. Sales, however, saw an increase in stalled deals. In our Lumonix Lab portfolio of 90 commercial prompts, LedgerLane’s qualified inclusion rate was 8%, and “good but expensive” framing appeared in 26% of runs. Their Overview presence did not translate to selection; it translated to faster objection formation.

Step 1: Identify the objection sentences the Overview keeps reusing

We extracted the repeated summary phrases across reruns and clustered them. The top offenders were not surprising: pricing ambiguity, implementation complexity, and unclear boundary conditions. The Overview kept the caveats because the caveats were the most consistent, groundable fragments across sources.

Step 2: Publish constraint-aware truth blocks and synchronize external nodes

We shipped a pricing boundaries table and an implementation constraints section (what is easy, what requires support, what is not supported), then updated two directory listings and a partner comparison page to mirror the same constraints language. Entity resolution improved because contradictions reduced. Semantic density improved because the key facts were now early and structured.

Step 3: Re-run portfolios and measure framing, not just visibility

After five weeks, qualified inclusion rose from 8% to 19%, and “good but expensive” framing dropped from 26% to 14%. The brand appeared slightly less often in top-of-funnel Overviews, but it was recommended more often in buying contexts. That trade is what mature teams choose.

Common Wisdom vs. Reality (Three Bad Overview Tactics)

Common wisdom: “Write longer pages so the model has more to work with”

Longer pages often reduce semantic density in the top chunks. Reality is that Overviews keep what’s structured and repeated. If you bury your constraints, the model will summarize someone else’s constraints about you.

Common wisdom: “Remove caveats; sound confident”

Removing caveats forces the model to reintroduce them from external sources. Reality is that you want to publish your own limitations in a grounded way, so the model can quote you instead of quoting a reviewer.

Common wisdom: “Optimize one ‘featured answer’ keyword”

Overviews are not one query; they’re an intent universe. Reality is portfolio optimization: comparison and transactional clusters matter more than one vanity definition prompt.

Overview-Safe Patterns: What to Publish So the Model Summarizes You Correctly

Write your own caveats (so reviewers don’t write them for you)

Most brands hide limitations because they fear objections. Overviews punish that fear by filling gaps with external sources. Publishing an explicit limitations and constraints block—grounded in your own pages with timestamps—often reduces negative balancing. It doesn’t eliminate objections; it makes them precise and controllable.

Design salience: make the “right” sentence the easiest sentence to keep

Overviews keep what is structured and repeated. If you want the model to keep “best for X constraints,” you must publish X constraints as a table or compact block early, then make that language consistent across your key external nodes. This is the quiet difference between being featured as a warning and being featured as a recommendation.

Audit the external “balancing sources” that Overviews use against you

Overviews often behave like cautious journalists: if your site is enthusiastic and the web contains skepticism, the summary will include the skepticism. You don’t fix that by becoming louder. You fix it by finding the two or three sources the system repeatedly uses to balance your claims—directories, review roundups, forum threads—and either correcting factual issues, publishing more groundable proof on your own canonical pages, or changing the way you frame constraints so the model stops reaching for the harshest interpretation.

FAQ

What should we measure for AI Overviews if clicks are down?

Measure qualified inclusion and framing: recommended vs caveat vs excluded, plus objection rate and source stability. Overviews can drive influence without traffic; your metric must survive that reality.

What’s the fastest Overview fix that isn’t “write more”?

Publish a constraints table and a canonical fact sheet with timestamps, then synchronize the external nodes that the Overview already uses. The fastest wins are often removing ambiguity, not adding content.

A question to debate

Would you rather appear in Overviews more often—or appear slightly less often but be framed in a way that increases selection in the moments buyers decide?