AI Rank Tracking vs Legacy SEO Trackers: Why the Old Tools Keep Lying (Politely)

Old-school rank tracking is comforting: keyword → position → chart → dopamine. AI answer tracking is not comforting, because the “position” is a moving target and the output changes with prompt phrasing, model updates, and retrieved sources. The uncomfortable truth is blunt: most “AI ranking” graphs are precision theater. They look scientific, but they’re built on assumptions that stopped being true the moment answers became generated narratives.

We (Lumonix) don’t think “rank” is useless. We think “rank” is an overloaded word that hides the real measurement job. In 2026, the job is to measure distribution (are you included), framing (how you’re presented), sources (what evidence is used), and stability (does the win stick under drift). If your tracker can’t do those, it will politely lie to you with tidy lines.

Key Takeaways (What a real AI tracking system must admit)

One prompt is not a measurement; it’s a vibe check

Prompt sensitivity is not an edge case. It’s the system. If your measurement can’t survive synonyms, constraints, and real buyer phrasing, it’s not measuring performance. It’s measuring a single sentence. The minimum bar is a portfolio with variants and reruns.
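To make "variants and reruns" concrete, here is a minimal sketch of how inclusion could be aggregated per prompt variant. The run log, the prompt and variant IDs, and the `spread` metric are all hypothetical and only for illustration; they are not a description of any particular tracker.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical run log: (prompt_id, variant_id, rerun_index, brand_included)
runs = [
    ("best-route-tool", "v1", 0, True),
    ("best-route-tool", "v1", 1, False),
    ("best-route-tool", "v2", 0, False),
    ("best-route-tool", "v2", 1, False),
    ("best-route-tool", "v3", 0, True),
    ("best-route-tool", "v3", 1, True),
]

def inclusion_by_variant(runs):
    """Return {prompt_id: {variant_id: inclusion rate across reruns}}."""
    buckets = defaultdict(lambda: defaultdict(list))
    for prompt_id, variant_id, _rerun, included in runs:
        buckets[prompt_id][variant_id].append(1.0 if included else 0.0)
    return {
        prompt: {variant: mean(hits) for variant, hits in variants.items()}
        for prompt, variants in buckets.items()
    }

def summarize(rates):
    """Per prompt: mean inclusion across variants plus the max-min spread."""
    summary = {}
    for prompt_id, by_variant in rates.items():
        values = list(by_variant.values())
        summary[prompt_id] = {
            "mean": mean(values),
            "spread": max(values) - min(values),
        }
    return summary

summary = summarize(inclusion_by_variant(runs))
print(summary)
```

A wide spread with a decent mean is the "single sentence" problem in numeric form: the average looks fine, but one phrasing wins every time and another never does.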

“Mentions up” can mean “the wrong story is repeating faster”

Legacy trackers trained teams to worship visibility. In model answers, visibility can scale an objection. A tracker must separate inclusion from framing: recommended vs caveat vs excluded, plus the objection taxonomy that predicts conversion friction.

Good tracking isolates what changed (prompt mix, sources, or reality)

When results move, you need to know whether you changed something, the model changed something, or the sources changed something. Otherwise you will “optimize” by shipping content into a drift storm and calling it strategy.

Deterministic SERPs vs Probabilistic Answers: Why Legacy Tools Break

Legacy tracking assumes stable ordering; answers don’t have ordering

SERPs are lists. Generated answers are narratives with citations (sometimes). Reducing a narrative to a single rank is a lossy compression that destroys the diagnostic signal. A tracker that outputs a single number is telling you “something happened,” not “what to fix.”

Comparison table: what changes, what doesn’t, what gets misread

| Dimension | Legacy rank tracking | AI answer tracking (what you need) | Typical failure |
|---|---|---|---|
| Output | Stable list of links | Generated narrative + citations + framing | Tool collapses narrative into “rank” and hides objections. |
| Noise | Manageable | High (prompt variance, model updates, source drift) | Dashboard hides variance; calls it “trend.” |
| Evidence | Links are the product | Sources are the lever | Tool ignores citations and can’t explain why you changed. |
| Fix path | On-page + links | Retrieval targets + entity consistency + truth layer | Tool can’t map actions to sources, so teams ship random content. |

The Technical Layer: What Model Trackers Must Account For

RAG means sources can change without you changing anything

In RAG-style systems, retrieval selects sources from an ecosystem that evolves. A directory updates its table. A forum thread goes viral. A policy PDF gets cached. The model’s output shifts because the evidence shifted. A tracker that doesn’t track source deltas is blind to the real cause of change.
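Tracking source deltas can be as simple as a set comparison between two runs of the same prompt. The sketch below assumes you already store the cited (or inferred) URLs per run; the function name `source_delta` and the example URLs are hypothetical.

```python
def source_delta(previous_urls, current_urls):
    """Compare the cited-source sets of two runs of the same prompt."""
    prev, curr = set(previous_urls), set(current_urls)
    union = prev | curr
    return {
        "added": sorted(curr - prev),      # sources that appeared
        "dropped": sorted(prev - curr),    # sources that disappeared
        # Jaccard overlap: 1.0 means identical sets, 0.0 means fully replaced
        "jaccard": len(prev & curr) / len(union) if union else 1.0,
    }

# Placeholder URLs, for illustration only
last_week = ["https://example.com/coverage", "https://directory.example/routepilot"]
this_week = ["https://example.com/coverage", "https://forum.example/thread/123"]

delta = source_delta(last_week, this_week)
print(delta)
```

If the answer changed and `added` contains a forum thread you have never seen before, you have a candidate cause before anyone opens a content brief.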

Semantic Density, Entity Resolution, Contextual Grounding—why “rank” is the wrong unit

Semantic Density determines whether retrieved chunks contain quotable decision criteria. Entity Resolution determines whether contradictions trigger hedging or exclusion. Contextual Grounding determines whether claims are backed by stable evidence or drift into hallucination. None of those can be summarized by a single rank without losing the fix path.

“If the system can’t tell you what changed—prompt mix, retrieval set, or source citations—then it can’t tell you what to do next. It’s just a fancy weather app.”

Lumonix CTO — Expert Insight

Lumonix Lab Case Study (Synthetic): The Chart Went Up. The Pipeline Didn’t.

Baseline: “rank improved,” but citations and framing degraded

A travel SaaS vendor (call them “RoutePilot”) used a legacy-style tracker for AI answers. The tool reported “rank #2” for a handful of prompts and showed upward charts. Sales performance stayed flat, and customer calls increasingly opened with: “Are you actually available in region X?” The model had started citing a forum thread that implied limited regional support.

Step 1: Replace “rank” with a measurement stack (inclusion, framing, sources, stability)

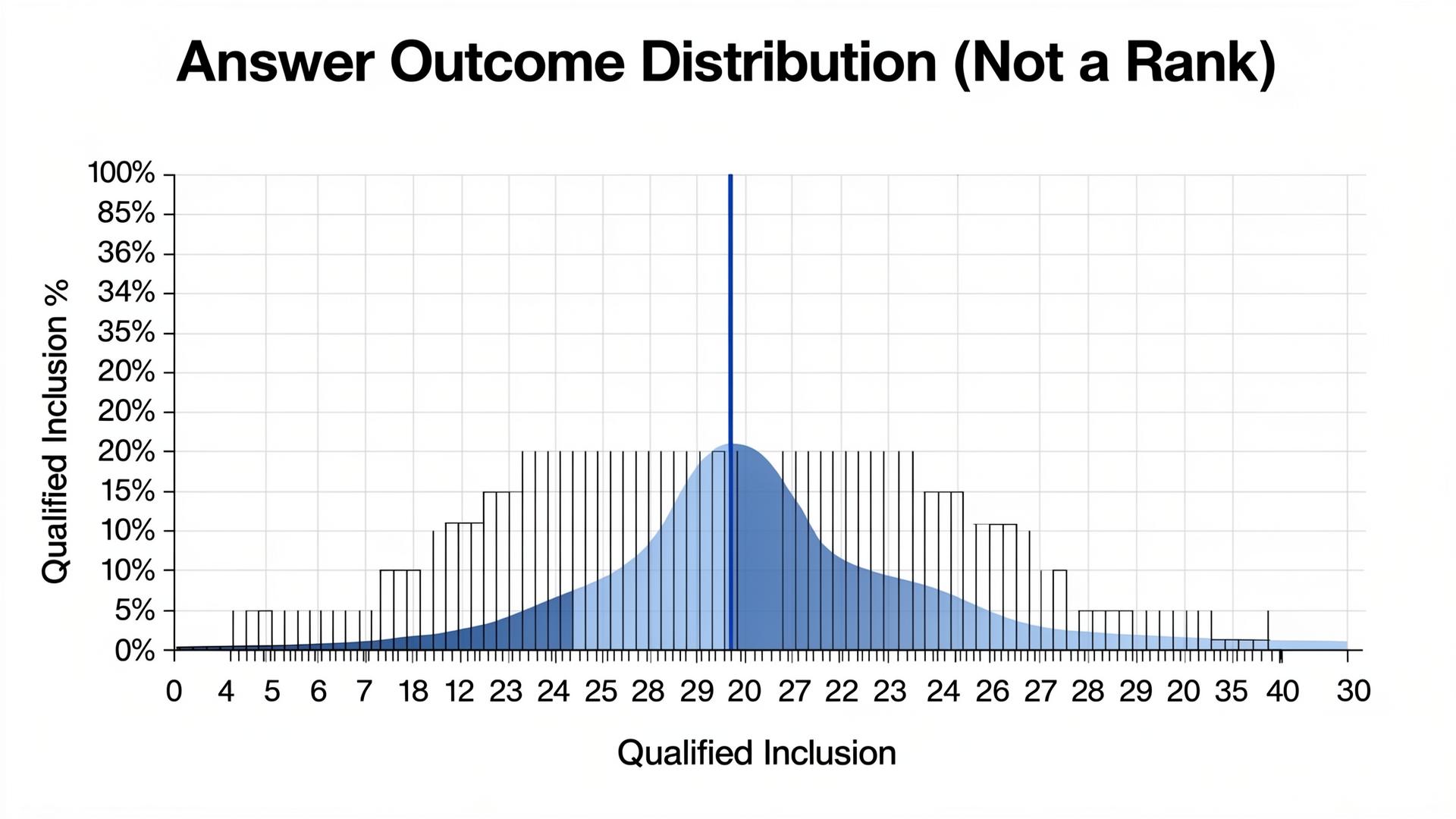

We rebuilt tracking across a 100-prompt portfolio with variants and reruns. Qualified inclusion in transactional prompts was 9%. The “limited regions” objection appeared in 18% of runs. Source mapping showed the objection was grounded in two external nodes, not the company’s site. The “rank” graph had hidden all of this.

Step 2: Ship a constraints truth layer and synchronize external nodes

We published a region coverage table with explicit boundaries and timestamps, then corrected the top-cited external nodes to match. We improved internal linking so the page became a retrieval target. Entity resolution improved because the ecosystem stopped disagreeing about coverage.

Step 3: Verify durability under reruns and source drift

After four weeks, qualified inclusion in transactional prompts rose from 9% to 20%, objection rate dropped from 18% to 10%, and source stability improved as the canonical table became a consistent grounding target. The “rank” metric didn’t become true; it became irrelevant.

Common Wisdom vs. Reality (Why Tracking Keeps Lying)

Common wisdom: “Weekly snapshots are enough”

Weekly snapshots miss drift spikes and source shifts. Reality is that you need continuous sampling (even lightweight) plus alert thresholds for high-impact wrong claims and objection spikes.
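One way a threshold could work, as a rough sketch: compare each day's objection rate against a trailing baseline and flag large jumps. The window length, the `max_jump` value, and the sample data are assumptions chosen for illustration, not recommended settings.

```python
def objection_alerts(daily_rates, window=7, max_jump=0.05):
    """Flag days whose objection rate exceeds the trailing mean by more than max_jump.

    daily_rates: list of objection rates (0.0 to 1.0), one per sampling day.
    Returns a list of (day_index, baseline, observed_rate) tuples.
    """
    alerts = []
    for day in range(window, len(daily_rates)):
        baseline = sum(daily_rates[day - window:day]) / window
        if daily_rates[day] - baseline > max_jump:
            alerts.append((day, round(baseline, 3), daily_rates[day]))
    return alerts

# Hypothetical data: a steady 10% objection rate, then a spike on day 8
daily = [0.10] * 7 + [0.11, 0.18]
alerts = objection_alerts(daily)
print(alerts)
```

A weekly snapshot taken on day 7 would have reported "stable." The spike on day 8 only exists if you were sampling on day 8.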

Common wisdom: “One number makes reporting easier”

One number makes reporting easier and fixing impossible. Reality is that decomposition is the product: show what moved (sources, framing, inclusion) so you can change it.

Common wisdom: “Ignore citations; they’re inconsistent anyway”

Citations and sources are your fix path. Reality is that inconsistent citations are not an annoyance; they’re a diagnostic signal that your grounding targets are unstable.

Implementation Notes: Building a Portfolio That Doesn’t Lie

Prompt weighting and refresh cadence (because buyers don’t speak like your marketing team)

A portfolio is only honest if it mirrors your funnel. Discovery prompts are useful for early warning, but comparison and transactional prompts are where objections and selection show up. Refresh cadence matters because drift is real: add new variants monthly, rotate synonyms weekly, and lock a “core set” that stays stable so you can see true movement instead of prompt churn.

| Cluster | Why it exists | Typical share in a balanced portfolio |
|---|---|---|
| Discovery | Surface your category story and definitions | 25–35% |
| Comparison | Expose decision criteria and competitive framing | 35–45% |
| Transactional | Measure qualified inclusion and objection friction | 20–30% |
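The shares in the table are easy to check mechanically. The sketch below assumes each prompt in your portfolio carries a cluster label; the `TARGET_SHARES` dictionary simply restates the table's ranges, and the example portfolio is made up.

```python
from collections import Counter

# Target ranges restated from the table above, as fractions of the portfolio
TARGET_SHARES = {
    "discovery": (0.25, 0.35),
    "comparison": (0.35, 0.45),
    "transactional": (0.20, 0.30),
}

def check_mix(prompt_clusters):
    """Report each cluster's share of the portfolio and whether it is in range."""
    if not prompt_clusters:
        raise ValueError("portfolio is empty")
    counts = Counter(prompt_clusters)
    total = len(prompt_clusters)
    report = {}
    for cluster, (low, high) in TARGET_SHARES.items():
        share = counts[cluster] / total
        report[cluster] = {"share": share, "in_range": low <= share <= high}
    return report

# Hypothetical portfolio that leans too heavily on discovery prompts
portfolio = ["discovery"] * 50 + ["comparison"] * 38 + ["transactional"] * 12
report = check_mix(portfolio)
for cluster, row in report.items():
    print(cluster, f"{row['share']:.0%}", "ok" if row["in_range"] else "out of range")
```

Running a check like this whenever variants are added keeps the monthly refresh from quietly turning the portfolio into a discovery-only vanity set.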

Scoring decomposition: what to store so you can actually debug

Store more than a score: store inclusion labels (recommended, caveat, excluded), objection tags, cited URLs (or inferred source sets), and a short “framing snippet” that captures how the model describes you. When the number moves, this is how you learn whether you gained influence, lost trust, or simply got summarized differently.
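As a sketch of what such a record might look like, the fields below mirror the list above. The class and field names (`RunRecord`, `Inclusion`, `decompose`) are illustrative assumptions rather than an actual schema, and the sample records are invented.

```python
from collections import Counter
from dataclasses import dataclass, field
from enum import Enum

class Inclusion(Enum):
    RECOMMENDED = "recommended"
    CAVEAT = "caveat"
    EXCLUDED = "excluded"

@dataclass
class RunRecord:
    """One model run for one prompt (hypothetical schema)."""
    prompt_id: str
    inclusion: Inclusion
    objection_tags: list[str] = field(default_factory=list)
    cited_urls: list[str] = field(default_factory=list)
    framing_snippet: str = ""

def decompose(records):
    """Return inclusion-label shares and per-tag objection rates for a batch."""
    total = len(records)
    if total == 0:
        return {"inclusion": {}, "objections": {}}
    labels = Counter(r.inclusion.value for r in records)
    # set() so a tag repeated within one run is counted once for that run
    tags = Counter(tag for r in records for tag in set(r.objection_tags))
    return {
        "inclusion": {label: n / total for label, n in labels.items()},
        "objections": {tag: n / total for tag, n in tags.items()},
    }

records = [
    RunRecord("p1", Inclusion.RECOMMENDED, cited_urls=["https://example.com/coverage"]),
    RunRecord("p1", Inclusion.CAVEAT, ["limited regions"], ["https://forum.example/thread/123"]),
    RunRecord("p2", Inclusion.EXCLUDED),
    RunRecord("p2", Inclusion.CAVEAT, ["limited regions"]),
]
breakdown = decompose(records)
print(breakdown)
```

The point of keeping the raw records is that any blended score can be recomputed from them later, while the reverse is never possible.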

Model updates and drift: how to avoid “false regressions”

When a major model update lands, the worst move is to panic-ship content based on one week of noise. Keep a frozen control set of prompts and run it on the same cadence as the rest of the portfolio so you can separate your changes from the ecosystem’s changes. If inclusion drops, start by checking whether the retrieved source set changed. If the sources changed, your fix is source work. If the sources didn’t change, your fix is semantic density and grounding on your canonical targets. A tracker that treats drift as “rank dropped” will push you into random action; a tracker that labels drift as drift will keep you honest.
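The triage order described above can be written down as a small decision function. This is a sketch under stated assumptions: the `noise_band` and `min_overlap` thresholds are placeholders you would calibrate against your own control set, and the function name and return labels are hypothetical.

```python
def triage_drop(score_before, score_after, sources_before, sources_after,
                noise_band=0.03, min_overlap=0.8):
    """Suggest where to look first after an inclusion drop.

    score_*: inclusion rates (0.0 to 1.0) for the same prompt set.
    sources_*: cited or inferred source URLs for those runs.
    """
    drop = score_before - score_after
    if drop <= noise_band:
        return "within noise: keep sampling"

    prev, curr = set(sources_before), set(sources_after)
    union = prev | curr
    overlap = len(prev & curr) / len(union) if union else 1.0

    if overlap < min_overlap:
        return "sources changed: do source work"
    return "sources stable: improve semantic density and grounding"

# Placeholder URLs, for illustration only
stable = ["https://example.com/coverage", "https://directory.example/routepilot"]
shifted = ["https://example.com/coverage", "https://forum.example/thread/123"]

print(triage_drop(0.20, 0.12, stable, shifted))
print(triage_drop(0.20, 0.12, stable, stable))
print(triage_drop(0.20, 0.19, stable, shifted))
```

Running the same function on the frozen control set and on the full portfolio gives you the comparison that matters: if both drop together, suspect the ecosystem before you suspect your own changes.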

One practical trick we use in Lumonix Lab is to store a small “evidence snapshot” for each run: the top cited URLs (or inferred sources), plus the single sentence that caused inclusion or exclusion. When you compare snapshots week over week, drift becomes visible as a source shift or a framing shift, not as a mysterious score wobble. It also makes stakeholder reviews faster because you can point to evidence instead of arguing about charts.

FAQ

What’s the minimum “honest” AI tracking setup?

A prompt portfolio with variants, reruns, inclusion + framing labels, source mapping, and alerts for objection spikes and critical wrong claims. If you only have a blended score, you have reporting—not measurement.

When should we still care about classic rank tracking?

When the outcome is still a list of links and clicks. For generated answers, treat rank-like metaphors carefully. Use them only if they’re tied to a stable measurement definition and a fix path.

A question to debate

If your AI “rank” goes up but citations drift and objections rise, do you keep tracking the chart—or do you redesign the metric around sources and truth?